Your Moonshot doesn’t have to be a Moonshot

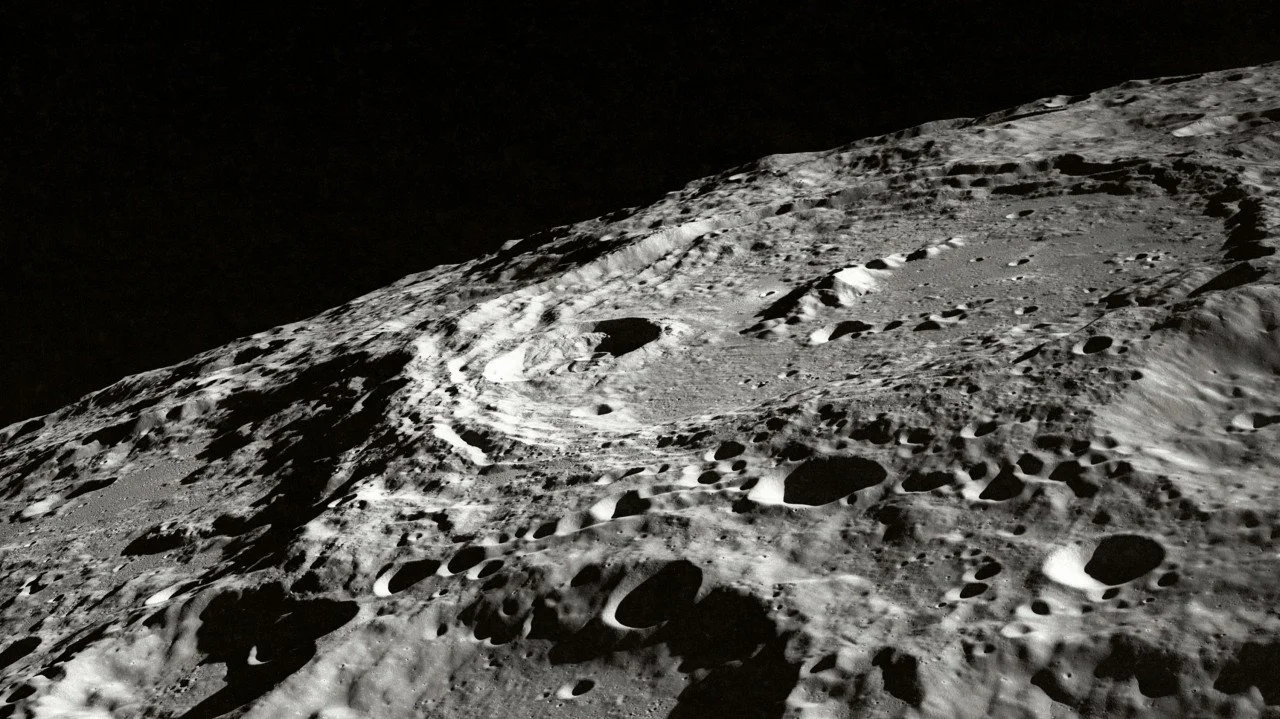

Photo credit: NASA via Unsplash

In 1962, NASA faced a difficult technology procurement choice.

They needed a guidance computer for the Apollo Moon missions. Did they go for a design based on new technology, working with researchers at MIT, or a design based on proven technology from their existing suppliers?

They chose the new technology: rather than discrete electronic transistors, they would use silicon chips, which combined multiple transistors into a single component. These chips weren’t like the chips of today, though: rather than millions or billions of transistors, each one contained just a few, enough to form a single logic gate. Thousands of them were needed to build the Apollo Guidance Computer (AGC).

NASA chose chips because they were small and hard-wearing, capable of surviving the rigours of launch and the cosmic radiation of space. But they had never been used before to build a machine of such complexity and ambition. In 1962, it was a bold decision.

By 1969, when Apollo 11 landed on the Moon, silicon was a much more natural choice: chips existed which contained thousands of transistors and had become standard components for computers. By the time the Apollo missions ended in 1972, the microprocessor had been invented, and the chip effectively was the computer.

At the end of Apollo, the AGC, so advanced and forward-looking in 1962, seemed antiquated and obsolete. It had succeeded in the astonishing task of navigating a crewed spacecraft to the Moon and returning it safely, but its time had passed. Commercial computers used for routine business tasks were much more powerful.

Does this story sound familiar? Most of us don’t get to put people on the Moon, but we do build computer systems through projects and programmes that last years – sometimes so long that, by the time we have finished, the brand-new technology we picked at the start of the programme has been superseded and surpassed several times over. If you’ve ever been to a launch party where ‘upgrade to the latest version’ is at the top of the to-do list for the next phase, then you know the feeling.

Fortunately, it does not have to be this way. The Apollo programme had good reasons for not radically changing the design of the AGC midway through (they did upgrade the chips in 1966, but the new chips still contained only a handful of transistors and performed the same basic logic function). They had to pick solutions which would undergo years of rigorous testing, extending the limits of what people and technology had ever achieved before. The flight crew and ground crew had to learn the behaviour of every component so that they knew what to do when things went wrong: they could not learn a new interface and a new set of program codes in the middle of a mission.

We are not subject to the same constraints. We are not (usually) sending our systems into space, and we do not need to send our users on multi-year training programmes.

This means that, when we embark on ambitious initiatives – our equivalent of Moonshots – we should seek flexibility rather than rigidity. When we are making choices equivalent to NASA picking MIT and the silicon chip over their established suppliers and the discrete transistor, we should evaluate our options not just on their capability today but on their capacity for change.

This can feel disconcerting and distracting. As with so much in the world of technology, it is easy to feel that life would be easier if things would just stop changing all the time. But they won’t stop, and we do ourselves a disservice when we wish that they would.

We should treat the constant innovation and disruption of the technology ecosystem not as a distraction but as a continuous flow of opportunity and capability, and we should see figuring out how to take advantage of it as a fundamental part of our job. This starts right at the beginning, when we choose what to build, what to buy and who to work with – and choose how we make those choices.